"Super human AI" is a marketing term, not a technical milestone. The honest 2026 question is narrower: which specific tasks can frontier models do better than the median expert, and which can they not do at all?

The data answers it cleanly. On static benchmarks like ARC-AGI-2, GPT-5.5 hits 85%, GPT-5.4 Pro 83%, Gemini 3.1 Pro 77%. Human baseline is 60%. By that one measure, frontier models exceed human performance.

Switch to ARC-AGI-3, which adds interactivity and novel reasoning, and the same models score under 1%. Humans still score 100%.

That gap is the entire 2026 story. Frontier AI is super-human at specific narrow tasks and decisively below human on others. Below is what is real, what is marketing, and what to actually do with it.

Where frontier AI is super-human in May 2026

| Capability | Status | Source |

|---|---|---|

| ARC-AGI-2 (static visual reasoning) | 85% (GPT-5.5), human baseline 60% | ARC Prize leaderboard |

| SWE-bench Verified (coding) | 87.6% (Claude Opus 4.7) | Anthropic |

| Long-context retrieval (1M tokens) | Solved on Claude Sonnet 4.6, Opus 4.7 | Anthropic |

| Single-shot draft writing | Faster than human, comparable quality on most tasks | Daily use |

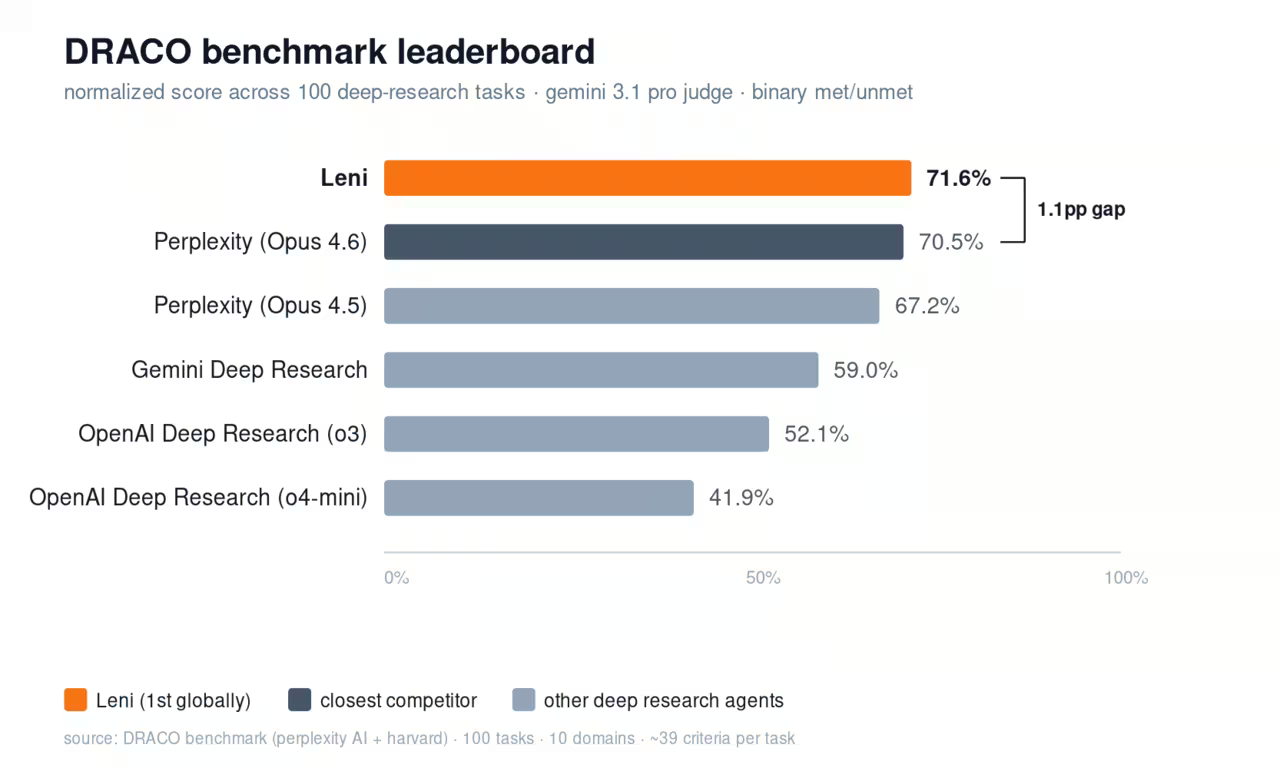

| Cited research synthesis (Perplexity Pro) | Faster than human analyst on bounded queries | Personal benchmark |

These are not theoretical. A team can deploy frontier models on these workflows today and beat human-only baselines on speed, often on quality.

Where frontier AI is decisively not super-human

| Capability | Status |
|---|---|
| ARC-AGI-3 (novel interactive reasoning) | <1% (frontier models), 100% (humans) |
| Long-horizon agentic tasks (>30 min) | Reliable failure mode |
| Original scientific discovery without scaffolding | Not demonstrated |
| Robust real-world embodiment | Limited to specific tasks |
| Trustworthy unsupervised execution on consequential decisions | Not safe to deploy |

The ARC-AGI-3 result is the cleanest signal of the gap. Same models, slightly different task structure (novel and interactive instead of static), and performance collapses by roughly 76 to 84 points, depending on the model.
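The size of that collapse can be worked out directly from the scores quoted in this article. The snippet below is a minimal sketch, not an official ARC Prize calculation: the function name `gap_report` is made up for illustration, and because ARC-AGI-3 is reported only as "under 1%", it assumes 1.0 as an upper bound, so the drops it prints are minimums.

```python
# Scores quoted in this article, in percent.
ARC_AGI_2 = {"GPT-5.5": 85.0, "GPT-5.4 Pro": 83.0, "Gemini 3.1 Pro": 77.0}
ARC_AGI_2_HUMAN = 60.0
ARC_AGI_3_HUMAN = 100.0

# Assumption: the article only says "under 1%", so 1.0 is an upper bound.
ARC_AGI_3_MODEL_UPPER_BOUND = 1.0


def gap_report(scores, human_v2, model_v3_bound, human_v3):
    """Return, per model, the margin over humans on ARC-AGI-2 and the
    minimum drop and minimum human deficit on ARC-AGI-3."""
    report = {}
    for model, v2 in scores.items():
        report[model] = {
            "margin_over_human_v2": v2 - human_v2,
            "min_drop_v2_to_v3": v2 - model_v3_bound,
            "min_deficit_vs_human_v3": human_v3 - model_v3_bound,
        }
    return report


report = gap_report(
    ARC_AGI_2, ARC_AGI_2_HUMAN, ARC_AGI_3_MODEL_UPPER_BOUND, ARC_AGI_3_HUMAN
)
for model, row in report.items():
    print(
        f"{model}: +{row['margin_over_human_v2']:.0f} vs humans on ARC-AGI-2, "
        f"drops at least {row['min_drop_v2_to_v3']:.0f} points on ARC-AGI-3"
    )
```

Under these assumptions every listed model sits 17 to 25 points above the human baseline on ARC-AGI-2 and at least 99 points below it on ARC-AGI-3.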

Anyone telling you frontier AI is one model away from AGI is reading ARC-AGI-2 and ignoring ARC-AGI-3. Both benchmarks are run by the same team.

What changed in 2025-2026

Three real developments matter:

Capability speed: Claude went from 8.6% on ARC-AGI-2 (Opus 4, May 2025) to 68.8% (Opus 4.6, Feb 2026), a gain of 60.2 points in nine months. That is faster than any prior twelve-month period in AI capability. Whether this rate continues is the unknown that drives the safety policy debates.

Anthropic's RSP v3.0: Took effect Feb 24, 2026. The "responsible scaling policy" that previously committed to a categorical training pause if specific capability thresholds were crossed was replaced with a dual-trigger model. Pause now requires both a "race-leadership" condition and a "material catastrophic risk" condition. This is the first time a frontier lab formally abandoned a hard-stop commitment.

EU AI Act enforcement: General-purpose AI obligations applied from Aug 2, 2025. Commission enforcement powers against GPAI providers come into force Aug 2, 2026. The compliance work for any AI vendor selling into the EU is now real, not theoretical.

OpenAI's tier system, by current consensus

OpenAI laid out a 5-tier framework:

- Level 1 Chatbots: Conversational AI. Reached 2022.

- Level 2 Reasoners: AI that solves problems at human-PhD level. Reached 2024-2025.

- Level 3 Agents: AI that takes actions over extended time horizons. Where frontier labs sit in mid-2026, partially.

- Level 4 Innovators: AI that helps with novel scientific discovery. Not reached.

- Level 5 Organizations: AI that runs entire organizations. Not reached.

Industry consensus places GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro at Level 3 in narrow agentic tasks (coding agents, research agents, browser agents) but not in robust long-horizon execution.

Practical implications

Three things to do with this in 2026:

Deploy frontier AI on bounded, super-human tasks: Coding assistance, research synthesis, draft writing, structured data extraction, long-document Q&A. These are real productivity wins. Most teams under-use AI on tasks where it already exceeds human performance.

Do not deploy frontier AI on long-horizon unsupervised execution: Multi-day autonomous projects, consequential decisions without human review, novel reasoning under pressure. The failure modes are real and not yet predictable.

Watch ARC-AGI-3 results, not ARC-AGI-2: ARC-AGI-2 performance is now saturating, which is the point at which a benchmark stops separating strong models from each other. The interesting frontier is ARC-AGI-3 and successor benchmarks designed for novel interactive reasoning. Track those.
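The first two recommendations above can be turned into a simple triage rule for a backlog of candidate tasks. What follows is a hypothetical sketch: the `Task` fields, the `triage` function, and the three outcome labels are invented for illustration, and the 30-minute cutoff is borrowed from the "Long-horizon agentic tasks (>30 min)" row earlier in this article rather than from any measured threshold.

```python
from dataclasses import dataclass

# Assumption: cutoff taken from the ">30 min" row in the table above.
LONG_HORIZON_MINUTES = 30


@dataclass(frozen=True)
class Task:
    name: str
    est_minutes: int            # expected duration of one autonomous run
    consequential: bool         # would a wrong result be costly or hard to undo?
    human_reviews_output: bool  # does a person check the result before it is used?


def triage(task: Task) -> str:
    """Return 'deploy', 'deploy_with_review', or 'do_not_automate'."""
    long_horizon = task.est_minutes > LONG_HORIZON_MINUTES
    # Consequential or long-horizon work with nobody checking it is the
    # combination the article warns against.
    if (task.consequential or long_horizon) and not task.human_reviews_output:
        return "do_not_automate"
    # The same work is acceptable once a human reviews the output.
    if task.consequential or long_horizon:
        return "deploy_with_review"
    # Short, low-stakes, bounded tasks are the clear wins.
    return "deploy"


backlog = [
    Task("summarize a long contract", 5, False, True),
    Task("multi-day autonomous refactor", 2880, False, False),
    Task("approve a refund", 2, True, False),
    Task("approve a refund, analyst signs off", 2, True, True),
]
for task in backlog:
    print(f"{task.name}: {triage(task)}")
```

The rule is deliberately conservative: human review is the only thing that moves a risky task out of the "do not automate" bucket, which matches the article's point that the failure modes are not yet predictable.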

What "AGI" means in 2026 (the honest version)

There is no consensus definition. The two camps:

Capability-based: "AGI is AI that can do any cognitive task a human can do at human level." Under this definition, frontier AI is not AGI in May 2026 and ARC-AGI-3 results suggest it is not close.

Economic: "AGI is AI that can do most economically valuable work." Under this definition, AI is closer, because AI substitutes already exist for many specific jobs. But "most" is where the disagreement lives.

Either way, "super human AI" as a single label is too vague to use. The useful framing is: where is it super-human, where is it not, and what should I deploy?

FAQ

Has any AI passed the AGI test in 2026?

No, because there is no single AGI test to pass, and the answer depends on the benchmark. Frontier models exceed human baselines on ARC-AGI-2, SWE-bench Verified, and most static reasoning benchmarks. They score under 1% on ARC-AGI-3, which adds interactivity and novelty.

What is the difference between super-human AI and AGI?

"Super-human AI" usually means AI that exceeds human performance on some specific task. "AGI" means AI that can do any cognitive task at human level. Frontier AI is super-human on narrow tasks and not AGI by the broader definition.

Are AI safety policies being rolled back?

Anthropic's RSP v3.0 (Feb 2026) replaced its categorical training pause with a dual-trigger model, the first formal step away from a hard-stop commitment by a frontier lab. EU AI Act enforcement is tightening, not loosening. The picture depends on jurisdiction and lab.

Should businesses adopt AI now or wait for AGI?

Adopt now on bounded, narrow tasks where AI already exceeds human performance (coding, research, drafting, long-doc analysis). Do not wait for AGI to deploy productivity gains that exist today.

What is the most realistic 2026 use case for frontier AI?

Augmenting expert knowledge work where the human verifies the output. Coding with Claude/Codex, research with Perplexity, writing drafts with ChatGPT. Productivity gains of 30-50% are common. Full automation of expert work is not.

