You probably have three tabs open right now. One for research, one for drafting, one for a long PDF or messy codebase. The friction is not whether AI helps. It does. The friction is picking the right model before you waste 20 minutes correcting the wrong one.

I run all three platforms daily. Perplexity, ChatGPT, and Claude solve different problems. Treating them as interchangeable is how you end up with mediocre output from all of them. Mapping each to a specific job is how you get good results faster.

Below is what each one is best at, what they cost in May 2026, where they break, and a decision tree at the end so you can pick fast.

Quick comparison

| Tool | Best for | Pro tier | Latest model | Context window |
|---|---|---|---|---|
| Perplexity Pro | Live web research with citations | $20/month | Routes Sonar, GPT-5.5, Claude Sonnet 4.6, Gemini 3.1 Pro | Per-model |
| ChatGPT Plus | Broad daily work, drafting, voice | $20/month | GPT-5.5 (Apr 23, 2026) | 1M tokens (API) |
| Claude Pro | Long-document reasoning, coding | $17/month annual ($20 monthly) | Claude Opus 4.7 / Sonnet 4.6 | 1M tokens (GA) |

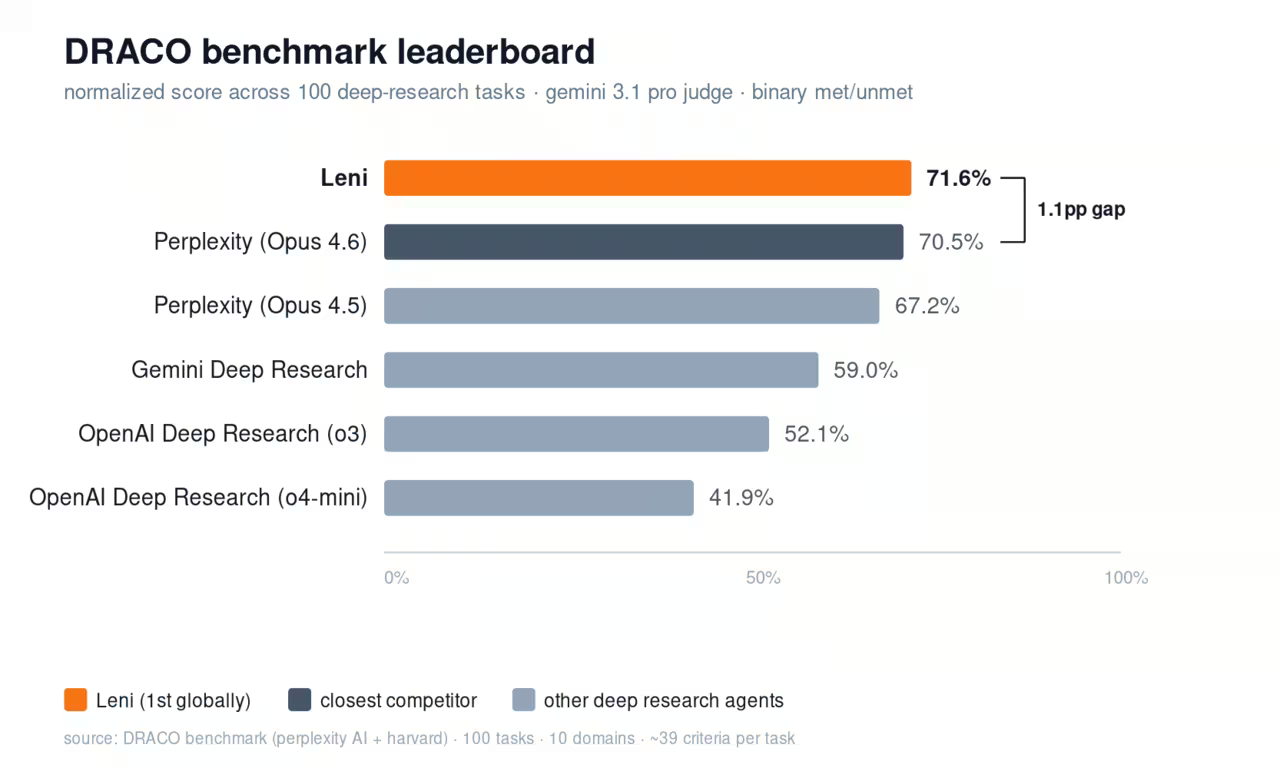

Perplexity is the answer engine

Perplexity is built around one job: give you a current, sourced answer. Citations are not an extra step. They are the product.

That changes the math when you do research-heavy work. A sourced answer goes straight into a brief. An unsourced one creates a second task: verify it. Perplexity skips that second task most of the time.

In May 2026, Perplexity Pro routes prompts across multiple frontier models including Sonar (Perplexity's own), GPT-5.5, Claude Sonnet 4.6, and Gemini 3.1 Pro. The Max tier ($200/month) adds Claude Opus 4.7, the Comet browser on macOS/Windows/iOS/Android, and Sora 2 Pro for short video clips. Education is $10/month if you have an .edu address.

Where it wins:

- Citations on every answer. Faster verification than ChatGPT or Claude.

- Pro Search runs multi-step research and synthesizes from many sources.

- Spaces store custom-instructed research collections per project.

- Comet browser handles multi-tab workflows while you read.

Where it falls short: Citations are not a guarantee of accuracy. One 2026 audit found that roughly 37% of source attributions were misattributed (this comes from a single secondary source, so treat the figure as directional). Always click through for high-stakes claims. Long-context reasoning is also weaker than Claude's. If you need to hold a 200-page document in working memory, Perplexity is not the right tool.

ChatGPT is the generalist

ChatGPT is what most people open first because it handles everything reasonably well. Drafting, brainstorming, code, voice conversations, image generation, agents. The flagship as of April 23, 2026 is GPT-5.5, with a Pro variant for harder questions.

That breadth is the strength and the weakness. It rarely beats Claude on pure long-document reasoning or Perplexity on cited research, but it is usually close enough that switching tools is not worth the friction.

Pricing in May 2026:

- Free: $0 (with ads)

- Go: $8/month (with ads in US)

- Plus: $20/month

- Pro: $100/month (tier added April 9, 2026) or $200/month (the original Pro tier)

- Business: $20/seat/month annual ($25 monthly)

- Enterprise: custom

Where it wins:

- Voice mode is the best in the category. Real conversations, not push-to-talk.

- Image generation via ChatGPT Images 2.0 is included on Plus and up. Sora was discontinued March 24, 2026, so video gen is no longer a ChatGPT differentiator.

- Codex with full Pro limits, Deep Research, and Agent Mode on the higher tiers.

- The most polished consumer-grade UX of the three.

Where it falls short: No built-in citation discipline. You will catch fluent answers that are wrong. Long-context handling improved with GPT-5.5 but still trails Claude on document-heavy reasoning. The dual-Pro pricing ($100 vs $200) is also confusing. Most teams should ignore Pro and stick with Plus or Business.

Claude is the deep-work machine

Claude's structural advantage shows up the moment your prompt includes a long document or a complex codebase. Claude Opus 4.7 (April 16, 2026) and Sonnet 4.6 both ship with a 1M token context window in GA, no beta header required. Sonnet 4.6 has 300k max output. Opus 4.7 has 128k max output.

That context size changes what you can ask. Summarizing a 10-page article is easy for any model. Holding a full strategy deck plus 6 months of support tickets plus a product requirements document and tracing contradictions across them is a different task. Claude handles it. ChatGPT loses threads. Perplexity is built around retrieval, not in-context analysis.
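
Before pasting a bundle like that into one prompt, it helps to check whether it plausibly fits. The sketch below is a rough estimate, not a tokenizer: the 4-characters-per-token ratio and the `reserve_for_output` budget are assumptions made for illustration, and real counts vary by model and content.

```python
# Rough heuristic only: about 4 characters per token for English prose.
# This ratio is an assumption, not a figure published by any vendor.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 1_000_000  # the 1M-token window cited in this article


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a string from its length."""
    return len(text) // CHARS_PER_TOKEN + 1


def fits_in_context(
    documents: dict[str, str], reserve_for_output: int = 20_000
) -> tuple[bool, int]:
    """Return (fits, estimated_tokens) for a bundle of documents.

    reserve_for_output is a hypothetical budget held back for the
    instructions and the model's reply.
    """
    total = sum(estimate_tokens(body) for body in documents.values())
    return total + reserve_for_output <= CONTEXT_WINDOW, total


if __name__ == "__main__":
    # Placeholder strings standing in for real exported files.
    bundle = {
        "strategy_deck.txt": "x" * 400_000,
        "support_tickets.txt": "x" * 2_000_000,
        "prd.txt": "x" * 120_000,
    }
    fits, total = fits_in_context(bundle)
    print(f"~{total:,} tokens, fits: {fits}")
```

If the estimate lands close to the limit, count tokens with the vendor's own tooling before relying on it.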

Pricing (claude.com/pricing, verified):

- Free: $0

- Pro: $17/month annual ($200 upfront) or $20 monthly

- Max: from $100/month (5x usage), $200/month (20x usage)

- Team Standard: $20/seat/month annual ($25 monthly), 5 seat minimum

- Team Premium: $100/seat/month annual ($125 monthly)

- Enterprise: $20/seat plus usage, custom contracts
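
The annual discounts are easier to compare as yearly totals. This is a small arithmetic sketch using only the prices listed above; the function names are illustrative.

```python
def annual_savings(monthly_price: float, annual_total: float) -> float:
    """Dollars saved per year by paying annually instead of month to month."""
    return monthly_price * 12 - annual_total


def effective_monthly(annual_total: float) -> float:
    """Annual total expressed as a per-month figure, rounded to cents."""
    return round(annual_total / 12, 2)


if __name__ == "__main__":
    # Claude Pro: $200 upfront versus $20 billed monthly.
    print(effective_monthly(200))        # 16.67, which the pricing rounds to $17
    print(annual_savings(20, 200))       # 40

    # Team Standard, per seat: $20/month on annual billing versus $25 monthly.
    print(annual_savings(25, 20 * 12))   # 60
```

At the 5 seat minimum, Team Standard on annual billing saves $300 a year over monthly billing.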

Where it wins:

- Best published 2026 SWE-bench Verified score: Opus 4.7 at 87.6%, Sonnet 4.6 at 79.6%. GPT-5.3 Codex landed at 85%, GPT-5.5 at 82.6% on the standard variant.

- 1M token context out of the box on the latest models.

- Computer Use in research preview lets Claude drive native apps via screenshots and mouse control.

- Projects, Remote Control across phone and desktop, /loop scheduled tasks for autonomous work.

- Claude Design generates prototypes, slides, and visual outputs natively.

Where it falls short: Higher hallucination rate than Perplexity on grounded-summarization benchmarks. Vectara's late-2025 leaderboard pegged Claude Opus around 10% and Sonnet around 4.4% on its harder enterprise tests, with reasoning-mode runs above 10% across all frontier models. Real-time web access is also weaker than Perplexity. If your work needs current data, Claude needs help.

How they compare on actual jobs

I ran the same 4 prompts through all three platforms last week. Here is what stood out.

Research with current data: Perplexity wins by default. ChatGPT now grounds many answers in web search but does not show its sources by default. Claude grounds least. For a fact-checked brief, Perplexity halves my time.

Long-document reasoning: Claude wins. I dropped a 180-page customer interview corpus into Claude Sonnet 4.6 and asked for contradictions across themes. The answer held context end-to-end. ChatGPT lost detail past 60 pages. Perplexity rejected the document as too large.

Coding: Claude Opus 4.7 leads on benchmarks (87.6% SWE-bench Verified). In practice, ChatGPT and Claude both write good code. The gap shows on multi-file repo refactors and complex debugging where Claude holds more context.

Drafting and tone: ChatGPT is the smoothest first draft. Claude writes more carefully but slower. Perplexity is not built for this.

Voice: ChatGPT only. The other two do not compete here.

Multi-modal output: Image generation is in ChatGPT (and now bundled into Plus). Sora is gone from OpenAI as of March 24, 2026. Perplexity Max routes to a Sora 2 Pro video product. Claude Design produces visual prototypes and slides.

How to choose

If you only buy one, here is the call:

If your job is research-heavy: Perplexity Pro at $20/month. Pair with ChatGPT or Claude for the writing step.

If your job is mixed knowledge work: ChatGPT Plus at $20/month. It is the safest default and the best voice mode.

If your job is reading long documents or shipping code: Claude Pro at $17/month annual. Add the Computer Use preview if you build agents.

If you can run two: Perplexity Pro ($20) plus Claude Pro ($17) covers research and reasoning for $37/month. That stack outperforms any single tool for professional work.

If you can run three: Add ChatGPT Plus ($20) for voice and the smoothest UX. Total $57/month per seat.
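
The choices above can be written down as a small lookup, which makes the costs easy to check. This is a sketch of this article's recommendations only; the `Job` enum and function names are made up for illustration, and prices are the ones quoted here (Claude Pro on annual billing).

```python
from enum import Enum


class Job(Enum):
    RESEARCH = "research-heavy"
    MIXED = "mixed knowledge work"
    DEEP_WORK = "long documents or shipping code"


# USD per month, as quoted in this article.
PLANS = {
    "Perplexity Pro": 20,
    "ChatGPT Plus": 20,
    "Claude Pro": 17,  # annual billing; $20 if paid monthly
}

SINGLE_TOOL = {
    Job.RESEARCH: "Perplexity Pro",
    Job.MIXED: "ChatGPT Plus",
    Job.DEEP_WORK: "Claude Pro",
}


def pick_stack(job: Job, tools: int = 1) -> list[str]:
    """Return the recommended subscriptions for a job and a tool budget."""
    if tools == 1:
        return [SINGLE_TOOL[job]]
    if tools == 2:
        # The two-tool recommendation is the same regardless of job.
        return ["Perplexity Pro", "Claude Pro"]
    if tools == 3:
        return ["Perplexity Pro", "Claude Pro", "ChatGPT Plus"]
    raise ValueError("tools must be 1, 2, or 3")


def monthly_cost(stack: list[str]) -> int:
    """Total monthly cost in USD for a list of plan names."""
    return sum(PLANS[name] for name in stack)


if __name__ == "__main__":
    for n in (1, 2, 3):
        stack = pick_stack(Job.DEEP_WORK, tools=n)
        print(n, stack, f"${monthly_cost(stack)}/month")
```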

The mistake I see most often is paying for ChatGPT Pro at $200/month thinking it unlocks something the rest do not. For most professionals it does not. The Plus tier covers 95% of the work.

FAQ

Which AI is best for professional research in 2026?

Perplexity Pro. Citations are baked into every answer, sources are clickable, and Pro Search runs multi-step queries that approximate a junior analyst. ChatGPT and Claude both ground in web data but neither shows sources by default.

Is Claude Opus 4.7 better than GPT-5.5 for coding?

On SWE-bench Verified, yes. Opus 4.7 scored 87.6%, GPT-5.3 Codex landed at 85%, GPT-5.5 at 82.6% on the standard variant. In daily use, Claude holds more repo context per turn. ChatGPT is faster on small one-shot tasks. Most teams use both.

Why would I pay $200/month for ChatGPT Pro or Claude Max?

Only if you hit usage caps on Plus or Pro and your work justifies the cost (heavy Codex use, long Deep Research sessions, daily long-document reasoning). For typical professional work, the $20 tiers are enough.

Can Perplexity replace ChatGPT?

For research, often yes. For writing, voice, image generation, and agent-style task completion, no. Perplexity is built as an answer engine, not a general assistant.

How do I stop overpaying across these tools?

Audit usage every quarter. If a tier has fewer than 3 active sessions per week, downgrade or cancel. Most professionals pay for capacity they never touch. Tools that prove their value will earn the renewal.
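
A minimal sketch of that quarterly audit, assuming you can pull a session count per subscription from your own logs or admin dashboards. The `Subscription` fields and the 13-week quarter are assumptions; the threshold of 3 sessions per week is the rule stated above.

```python
from dataclasses import dataclass

MIN_SESSIONS_PER_WEEK = 3  # threshold from the rule of thumb above


@dataclass
class Subscription:
    name: str
    monthly_cost: float
    sessions_last_quarter: int  # hypothetical field, filled from your own usage data


def audit(subs: list[Subscription], weeks: int = 13) -> list[Subscription]:
    """Return the subscriptions averaging fewer than 3 sessions per week."""
    return [
        sub
        for sub in subs
        if sub.sessions_last_quarter / weeks < MIN_SESSIONS_PER_WEEK
    ]


if __name__ == "__main__":
    # Illustrative numbers, not measured usage.
    subs = [
        Subscription("ChatGPT Plus", 20, sessions_last_quarter=60),
        Subscription("Claude Pro", 17, sessions_last_quarter=20),
    ]
    flagged = audit(subs)
    print([s.name for s in flagged])  # ['Claude Pro']
    print(f"Potential savings: ${sum(s.monthly_cost for s in flagged)}/month")
```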

