The 2025 DORA report killed the comfortable narrative that AI assistants automatically improve developer productivity. The data shows a sharper picture. AI raised individual output by 21% on tasks completed and 98% on PRs merged. It also raised time-in-PR-review by 441% and incidents per PR by 242.7%. Teams ship more, but the rework rate exploded.

This is the AI Productivity Paradox. AI is a multiplier, not a fix. It amplifies whatever culture, CI/CD, and platform engineering are already in place. Teams with strong foundations see real gains. Teams without them ship faster broken code. Below is what the 2026 DORA benchmarks look like, which AI tool to pick, and the team practices that actually move the needle.

Quick reference: 2026 DORA elite-tier benchmarks

| Metric | Elite tier | High | Medium | Low |
|---|---|---|---|---|
| Deployment frequency | Multiple per day | Once per day to once per week | Once per week to once per month | Less than monthly |
| Lead time for changes | Less than 1 hour | 1 day to 1 week | 1 week to 1 month | More than 1 month |
| Mean time to restore (MTTR) | Less than 1 hour | Less than 1 day | 1 day to 1 week | More than 1 week |
| Change failure rate | 0-15% | 16-30% | 16-30% | 31-45% |
| Rework rate (5th metric, 2025) | Tracked separately | | | |

Only 9.4% of teams hit elite-tier deployment frequency in 2026.
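To make the table easier to apply, here is a minimal sketch that maps a measured lead time or MTTR to a tier. The cutoffs are read off the table; treating each upper bound as a strict cutoff, and approximating "1 month" as 30 days, are assumptions of this sketch, as are the names `classify`, `LEAD_TIME_BOUNDS`, and `MTTR_BOUNDS`.

```python
from datetime import timedelta

# Upper bounds per tier, read off the table above. The table leaves a gap
# between "less than 1 hour" and "1 day" for lead time; treating each bound
# as a simple cutoff is an assumption of this sketch.
LEAD_TIME_BOUNDS = [
    ("elite", timedelta(hours=1)),
    ("high", timedelta(weeks=1)),
    ("medium", timedelta(days=30)),  # "1 month" approximated as 30 days
]
MTTR_BOUNDS = [
    ("elite", timedelta(hours=1)),
    ("high", timedelta(days=1)),
    ("medium", timedelta(weeks=1)),
]


def classify(value, bounds):
    """Return the first tier whose upper bound the value is strictly below, else 'low'."""
    for tier, upper in bounds:
        if value < upper:
            return tier
    return "low"


print(classify(timedelta(minutes=40), LEAD_TIME_BOUNDS))  # elite
print(classify(timedelta(days=3), LEAD_TIME_BOUNDS))      # high
print(classify(timedelta(days=2), MTTR_BOUNDS))           # medium
```

Deployment frequency and change failure rate could be classified the same way with their own bound lists.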

What changed about productivity in 2025-2026

Three shifts you cannot ignore:

1. AI adoption hit 90%: Median 2 hours per day on AI coding tools. 80%+ of developers report productivity gains. The tools are no longer optional.

2. The AI Productivity Paradox: Individual output up sharply, organizational delivery often flat. Teams ship more code but spend longer in code review, hit more incidents, and accumulate more technical debt.

3. DORA added rework rate as 5th metric (2025): Formalized the AI tradeoff. Rework rate measures how often shipped code has to be re-shipped due to bugs or revisions. AI tools push this number up if not paired with strong CI/CD and review practices.

The implication: buying Cursor or Claude Code does not improve productivity. It amplifies whatever foundation exists. Teams with weak CI/CD, weak code review, and weak platform engineering ship faster broken code with AI.

Pick the right AI coding assistant

Four tools dominate in 2026:

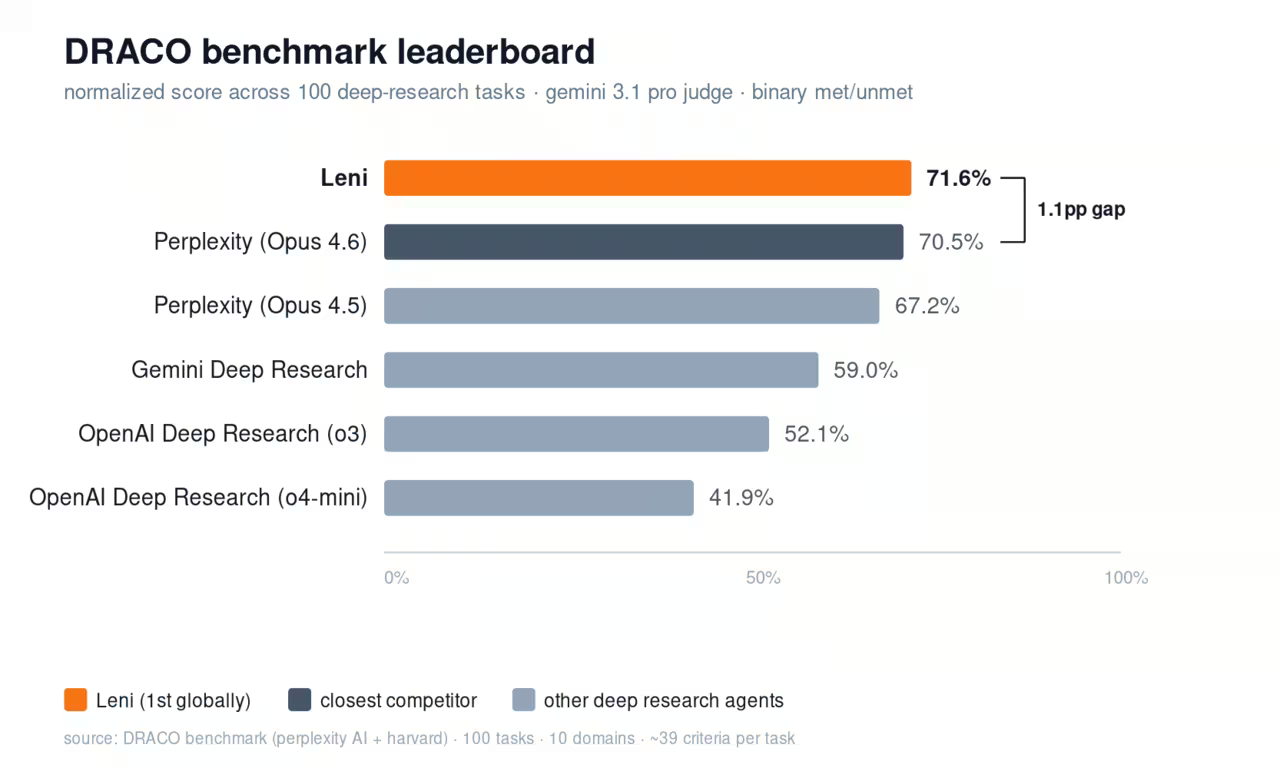

GitHub Copilot Pro ($10/month, 300 premium requests, Claude Opus 4.6 access; Business $19/seat): Most polished editor integration. Best when your team is already deep in GitHub. The default for most enterprises.

Cursor Pro ($20/month; Pro+ $60; Ultra $200): The fork of VS Code that makes AI a first-class editor citizen. Multi-file context, agent mode, and the best UX for AI-driven code editing. The choice for teams that want AI to do more than autocomplete.

Windsurf Pro ($15/month, 500 credits; Teams $30; Max $200): Cursor's main competitor. Cascade agent mode, Flow technology that maintains context across files. Strong on agentic workflows.

Claude Code Pro ($17/month; Max $100+): CLI-first, deep integration with Claude Opus 4.7. Best for terminal-native developers and long-context refactoring. Different paradigm from Cursor's editor model.

The mistake I see: paying for two of these stacked on the same engineer. Pick one as the primary daily driver. Adding Copilot autocomplete on top of Cursor is rarely worth the extra $10/month.

The 2026 default I would recommend: Cursor Pro at $20/month for active developers, Claude Code for CLI-heavy work and long refactors. GitHub Copilot Business if your org needs SOC 2 compliance and Microsoft enterprise contracts.
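The cost of stacking is easy to underestimate at team scale. The sketch below does the arithmetic using the per-seat list prices quoted in this article; the team size of 25 and the function name `annual_cost` are hypothetical.

```python
# Monthly per-seat list prices as quoted in this article.
MONTHLY_PRICE = {
    "copilot_pro": 10,
    "copilot_business": 19,
    "cursor_pro": 20,
    "windsurf_pro": 15,
    "claude_code_pro": 17,
}


def annual_cost(tools, seats):
    """Annual spend for a team where every seat carries every tool in `tools`."""
    return 12 * seats * sum(MONTHLY_PRICE[t] for t in tools)


seats = 25  # hypothetical team size
single = annual_cost(["cursor_pro"], seats)
stacked = annual_cost(["cursor_pro", "copilot_pro"], seats)
print(single, stacked, stacked - single)  # 6000 9000 3000
```

For this hypothetical team, stacking Copilot Pro on top of Cursor Pro adds $3,000 per year.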

Team practices that actually move DORA metrics

Three practices that move the needle, with or without AI:

1. Trunk-based development with feature flags: Ship to main multiple times per day. Hide unfinished work behind feature flags. The single biggest unlock for deployment frequency and lead time. Without this, AI assistants help individual velocity but not org delivery.

2. Strong CI/CD with fast test feedback: Sub-15-minute CI on PRs. Sub-1-hour deploy pipeline to production. AI tools amplify what CI/CD already does. Slow CI plus AI equals more broken commits in flight.

3. Platform engineering investment: Internal developer platforms (IDPs) that handle environments, deploys, observability, and on-call routing. The 2026 DORA report shows platform engineering is now the differentiator between teams that benefit from AI and teams that ship faster broken code.
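For the first practice, a feature flag can be as small as a conditional around the new code path. The sketch below is a minimal illustration, assuming flags are stored in environment variables with a `FEATURE_` prefix; that storage choice, the helper `flag_enabled`, and the checkout functions are all hypothetical. Many teams use a dedicated flag service instead.

```python
import os


def flag_enabled(name, default=False):
    """Read a flag from an environment variable such as FEATURE_NEW_CHECKOUT=1.

    The FEATURE_ prefix and env-var storage are assumptions of this sketch.
    """
    raw = os.environ.get(f"FEATURE_{name.upper()}")
    if raw is None:
        return default
    return raw.strip().lower() in {"1", "true", "on", "yes"}


def legacy_checkout(cart):
    # Existing behavior: plain total.
    return sum(cart)


def new_checkout(cart):
    # Hypothetical unfinished work: merged to main, but dark until the flag is on.
    return round(sum(cart) * 0.9, 2)


def checkout(cart):
    """Route to the new path only when the flag is enabled."""
    if flag_enabled("new_checkout"):
        return new_checkout(cart)
    return legacy_checkout(cart)
```

With the flag unset, `checkout` keeps calling the legacy path, so the unfinished code can ship to main without changing behavior in production.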

What does not move the needle: stand-up frequency tweaks, story-point recalibration, ticket grooming process changes. These are productivity theater. Deploy frequency, lead time, MTTR, and change failure rate move when foundations improve.

How to measure the AI productivity tradeoff

Three practical metrics to add to your dashboard:

Time in PR review: If this is rising while deployment frequency is also rising, AI is producing more PRs but reviewers cannot keep up. Either increase reviewer capacity or slow down the AI throughput.

Incidents per deploy: If this is rising, change failure rate is going up. AI is shipping more bugs. Tighten CI tests or slow down.

Rework rate: How often shipped code is re-shipped within 30 days. The DORA 5th metric. Rising rework is the clearest signal AI is shipping fast and breaking.

The right tradeoff varies by team. A startup willing to break things to ship may accept higher rework. A regulated team cannot. Pick the metric that matches your context.
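The three metrics above can be computed from data most teams already have. This is a rough sketch under stated assumptions: the record shapes `PullRequest` and `Deploy` are hypothetical, review time is approximated as opened-to-merged, and a deploy counts as reworked if a later deploy references it through the hypothetical `fixes_deploy` field within 30 days.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    opened_at: datetime
    merged_at: datetime


@dataclass
class Deploy:
    deploy_id: str
    shipped_at: datetime
    incidents: int = 0
    fixes_deploy: Optional[str] = None  # id of an earlier deploy this one re-ships


def median_review_hours(prs):
    """Median hours from PR opened to PR merged (a proxy for time in review)."""
    return median((pr.merged_at - pr.opened_at).total_seconds() / 3600 for pr in prs)


def incidents_per_deploy(deploys):
    return sum(d.incidents for d in deploys) / len(deploys)


def rework_rate(deploys, window=timedelta(days=30)):
    """Share of deploys that a later deploy re-shipped within the window."""
    shipped = {d.deploy_id: d.shipped_at for d in deploys}
    reworked = {
        d.fixes_deploy
        for d in deploys
        if d.fixes_deploy in shipped
        and timedelta(0) <= d.shipped_at - shipped[d.fixes_deploy] <= window
    }
    return len(reworked) / len(deploys)


# Hypothetical sample data.
t0 = datetime(2026, 1, 5, 9, 0)
prs = [
    PullRequest(t0, t0 + timedelta(hours=4)),
    PullRequest(t0, t0 + timedelta(hours=10)),
    PullRequest(t0, t0 + timedelta(hours=30)),
]
deploys = [
    Deploy("d1", t0),
    Deploy("d2", t0 + timedelta(days=2), incidents=1),
    Deploy("d3", t0 + timedelta(days=10), fixes_deploy="d1"),  # inside the window
    Deploy("d4", t0 + timedelta(days=50), fixes_deploy="d2"),  # outside the window
]
print(median_review_hours(prs), incidents_per_deploy(deploys), rework_rate(deploys))
```

How a team tags a deploy as a fix for an earlier one (commit trailers, ticket links, revert detection) is the hard part in practice and is left out here.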

What "elite" actually requires in 2026

Elite-tier teams in 2026 share five traits:

Deployment frequency multiple times per day: Requires trunk-based development, feature flags, and full CI/CD automation.

Lead time under 1 hour: From commit to production. Requires sub-15-minute CI and a deploy pipeline measured in minutes, not hours.

MTTR under 1 hour: Requires strong observability, runbooks for common failures, and on-call rotations that can act fast.

Change failure rate under 15%: Requires test discipline, code review quality, and feature flags to limit blast radius.

Strong platform engineering: Internal developer platforms that abstract away environment setup, deploys, and on-call routing.

Only 9.4% of teams hit elite-tier deployment frequency. Most teams are mid-tier. AI does not move you from medium to elite. The foundations do.
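To see where a team stands against these thresholds, the four core metrics can be derived from deployment records. The sketch below assumes a hypothetical `Deployment` record with commit, deploy, and restore timestamps; field names and the use of medians are choices of this example, not something the DORA report prescribes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # first commit in the change
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # caused an incident or rollback
    restored_at: Optional[datetime] = None  # when service recovered, if it failed


def dora_summary(deployments, period_days):
    """Summarize the four core metrics over a period of `period_days` days."""
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deployments) / period_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore": median(restores) if restores else None,
    }


# Hypothetical two-day sample.
t0 = datetime(2026, 3, 2, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(minutes=30)),
    Deployment(t0, t0 + timedelta(minutes=45)),
    Deployment(t0, t0 + timedelta(hours=2), failed=True,
               restored_at=t0 + timedelta(hours=2, minutes=40)),
    Deployment(t0, t0 + timedelta(minutes=50)),
]
summary = dora_summary(sample, period_days=2)
print(summary)
```

In this sample the median lead time is under an hour and the failed deploy was restored in 40 minutes, but a change failure rate of 25% would miss the under-15% bar.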

What to do this quarter

If you want measurable productivity gains in 90 days:

Weeks 1-2: Pick one AI tool as the team default (Cursor or Copilot). Standardize. No multiple stacked tools per engineer.

Weeks 3-6: Audit CI/CD pipeline. Sub-15-minute CI is the target. Investigate the slowest tests, parallelize, fix flakiness.

Weeks 7-10: Implement feature flags if you do not have them. Move toward trunk-based development.

Weeks 11-13: Measure DORA metrics weekly. Establish baseline. Set realistic targets for the next quarter.
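For the CI audit in the plan above, a first pass can be done with nothing more than per-test durations. The sketch below lists the slowest tests and estimates wall-clock time if the suite were split across parallel workers using a greedy longest-first assignment. The test names and durations are made up, and `slowest` and `shard` are hypothetical helpers, not part of any CI product.

```python
import heapq


def slowest(durations, n=3):
    """Return the n slowest tests as (name, seconds), slowest first."""
    return sorted(durations.items(), key=lambda kv: kv[1], reverse=True)[:n]


def shard(durations, workers):
    """Greedy longest-first assignment of tests to parallel workers.

    Returns one (total_seconds, [test names]) pair per worker.
    """
    # Heap entries are (total so far, worker index, assigned tests); the unique
    # index breaks ties so the lists are never compared.
    heap = [(0.0, i, []) for i in range(workers)]
    heapq.heapify(heap)
    for name, secs in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        total, idx, names = heapq.heappop(heap)
        names.append(name)
        heapq.heappush(heap, (total + secs, idx, names))
    return [(total, names) for total, _, names in sorted(heap, key=lambda t: t[1])]


# Hypothetical durations in seconds (18.5 minutes if run serially).
durations = {
    "test_checkout": 420,
    "test_search": 300,
    "test_auth": 180,
    "test_profile": 120,
    "test_api": 60,
    "test_utils": 30,
}
print(slowest(durations, 2))
plan = shard(durations, workers=3)
print(max(total for total, _ in plan) / 60, "minutes wall-clock")
```

The slowest single test sets a floor on wall-clock time no matter how many workers are added, which is why the audit starts with the slowest tests.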

This is unsexy work. It is also what actually moves the needle. AI tools alone do not.

FAQ

Does GitHub Copilot actually improve productivity?

It improves individual output (21% more tasks completed, 98% more PRs merged per the 2025 DORA report). Organizational delivery often stays flat because rework rate and incidents per PR go up. AI is a multiplier of existing CI/CD and platform engineering, not a fix.

Cursor vs GitHub Copilot vs Claude Code: which should I use in 2026?

Cursor Pro at $20/month for most active developers. Copilot Business at $19/seat if your org needs Microsoft enterprise compliance. Claude Code at $17/month for CLI-heavy work and long refactors. Pick one as the primary tool; do not stack.

What are good DORA metrics for a 2026 startup?

Deployment frequency at least daily. Lead time under 1 day. MTTR under 4 hours. Change failure rate under 25%. These are high-tier benchmarks. Elite tier (multiple deploys/day, lead time under 1 hour) requires platform engineering investment most startups have not made.

What is the DORA 5th metric?

Rework rate. Added in 2025. Measures how often shipped code is re-shipped within 30 days due to bugs or revisions. AI coding assistants tend to push this number up if not paired with strong code review and CI/CD.

How do I know if AI is helping or hurting my team's productivity?

Track time in PR review, incidents per deploy, and rework rate alongside deployment frequency. If deployment frequency rises but the other three also rise sharply, AI is shipping faster broken code. The fix is CI/CD and review process, not removing AI.
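That comparison can be automated against two snapshots of the dashboard. This is a sketch, not a standard: the metric keys, the function `ai_tradeoff_signal`, and the 20% cutoff for "sharply" are all assumptions, since the text does not quantify the threshold.

```python
def pct_change(before, after):
    """Percentage change from before to after."""
    return (after - before) / before * 100


def ai_tradeoff_signal(baseline, current, threshold_pct=20):
    """Compare two metric snapshots.

    Flags the pattern described above: deployment frequency up while review
    time, incidents per deploy, and rework rate are all up by more than
    `threshold_pct` (a hypothetical cutoff).
    """
    change = {k: pct_change(baseline[k], current[k]) for k in baseline}
    quality_keys = ["review_hours", "incidents_per_deploy", "rework_rate"]
    shipping_faster = change["deploys_per_week"] > 0
    quality_worse = all(change[k] > threshold_pct for k in quality_keys)
    return shipping_faster and quality_worse, change


# Hypothetical snapshots before and after AI tool rollout.
baseline = {"deploys_per_week": 10, "review_hours": 6,
            "incidents_per_deploy": 0.10, "rework_rate": 0.08}
current = {"deploys_per_week": 16, "review_hours": 11,
           "incidents_per_deploy": 0.15, "rework_rate": 0.12}

flagged, change = ai_tradeoff_signal(baseline, current)
print(flagged, {k: round(v, 1) for k, v in change.items()})
```

A flag here is a prompt to look at CI and review capacity, not a verdict on the tool.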
